AI is much more than ChatGPT. This disruptive technology holds great potential in fields we’re just beginning to understand… although it also has its limits.

Computer science researcher Ana Teresa Correia de Freitas is one of the leading pioneers in the field of artificial intelligence applied to medical research in Portugal. Her work: a system based on genomic big data that helps diagnose and predict diseases.

Algorithms like those used by this data analyst help to better interpret clinical information and make decisions, but they can also help improve communication with patients… and even replace it.

The most curious thing is that in various experiments with chatbots (programs designed to simulate conversations with humans via text or voice) that have visited patients online, the humans being served couldn’t tell whether the doctor who had treated them was a real person or an artificial intelligence application. They even rated their experience with the AI more highly than a real visit with a doctor. This example not only perfectly illustrates how this technology is part of our daily lives, but also highlights how much we sometimes don’t even realize it.

When we talk about artificial intelligence, we think of ChatGPT or many other text or image generation platforms. But this technology is an active part of many other sectors we couldn’t even imagine, including industry and scientific research in all its fields.

Thanks to it, we’ve uncovered the three-dimensional structure of proteins and created precise maps of climate models. We can also see where the human eye can’t reach and create precise digital replicas of both historical monuments and our bodies. We may no longer be able to live without this disruptive technology, but what exactly is AI? How does it work and learn? What are its uses? What does the future hold? And, above all, where is the limit? Here are some answers:

What do we mean by AI?

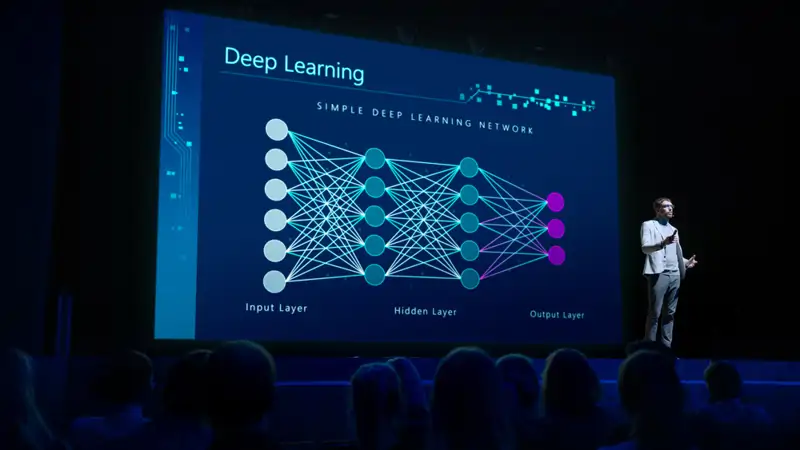

Broadly speaking, AI is the ability of machines to imitate the way humans learn and even reason. Strictly speaking, there isn’t just one; there are as many types of artificial intelligence as there are generative models. However, they all have one common characteristic: the ability to replicate human cognitive processes, which they imitate by learning from data, an approach called machine learning whose most sophisticated branch is known as ‘deep learning.’

Much more than ChatGPT

«AI encompasses numerous branches. Each model is unique and designed to enhance our ability to see and understand,» says Professor Lluis Nacenta, an expert in this disruptive technology, in this National Geographic Dialogue.

«It sees what the human eye can’t. It picks up irregularities, patterns, invisible to us. It allows us to see much better, remember much better, have the ability to connect ideas much better, and take into account much more data at once than our brain is capable of… However, we have to remember that it’s still a prosthesis. It forces us to redefine concepts we took for granted. It confronts us with our own idea of intelligence,» the expert points out.

The machine that learns by itself

A key concept for understanding AI is deep learning. It’s actually a much more sophisticated branch of machine learning.

The main difference between traditional machine learning and deep learning lies in the complexity of their neural networks. Traditional models typically have one or two layers, while deep learning models can have hundreds or even thousands of layers, allowing them to learn much more complex patterns.

The deep learning system allows the algorithm to learn from multiple layers.

Additionally, while traditional machine learning requires well-organized and labeled data to function properly, deep learning can work with unstructured and unlabeled data. This allows it to automatically identify important features within the data and improve its results over time.
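To make the idea of "layers" more concrete, here is a minimal sketch in Python. It is only an illustration, not how any real system is built: the function names (`make_layer`, `forward`, `build_network`), the layer sizes, and the random weights are all assumptions made for this example, and nothing is actually trained. It simply shows that a "deep" network is the same operation repeated many more times than in a "shallow" one.

```python
import math
import random

def make_layer(n_in, n_out, rng):
    """Hypothetical helper: a layer is n_out rows of n_in random weights."""
    return [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]

def build_network(sizes, seed=0):
    """Build one layer for each consecutive pair of sizes."""
    rng = random.Random(seed)
    return [make_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]

def forward(x, layers):
    """Pass the input through every layer in turn, applying tanh each time."""
    for weights in layers:
        x = [math.tanh(sum(w * v for w, v in zip(row, x))) for row in weights]
    return x

# A "traditional" network with two layers versus a "deep" one with 51.
shallow = build_network([4, 8, 2])
deep = build_network([4] + [8] * 50 + [2])

x = [0.5, -0.2, 0.1, 0.9]  # made-up input features
print(len(shallow), "layers ->", forward(x, shallow))
print(len(deep), "layers ->", forward(x, deep))
```

In a real model the weights would be adjusted during training rather than left random; the point here is only the difference in depth.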

«AI allows us to see much better, remember much better, and connect ideas much better.» Lluis Nacenta, writer, musician, and researcher.

Generative AI traces its roots to Google Brain, the deep learning research team founded in 2011 as part of Google AI, the Google division dedicated to AI. «They were the first to create algorithms called transformers capable of searching for references based on deep learning,» engineer Manuela Delgado tells National Geographic. Delgado is a popularizer and specialist in digital transformation and disruptive technologies who has been working on projects related to innovation and technology, including AI, for two years. «All the models are based on the same thing, a kind of ‘synthetic brain’ that takes on a different name depending on the company that designed it.»

“A useful way to better understand how generative AI works is to look at how the way we’ve interacted with this technology has evolved,” Nacenta points out. “We started by training machines to discriminate between multiple images (for example, Captcha tests, in which you have to select certain images from a box). Before, we taught them to recognize a cat. Now we’ve turned that around, telling them to show us the cat through a description… what we understand as a prompt.”

AI: The complement that science needs

But algorithms are useful for much more than generating text or images. In the field of scientific research, this technology becomes an invaluable aid, as, among other things, it allows us to reach previously unimaginable limits.

It can be a great help in diagnostic imaging, but it is also especially useful in many other scientific fields: some algorithms analyze large amounts of data that are used to create climate maps, while others create digital replicas.

The possibilities of AI in the scientific field are as broad as they are unexpected.

Digital twins

Digital twins are like exact copies, but in virtual format. They can be of a specific object (for example, an organ in the human body) or of an entire system (for example, a city). It’s a process similar to a simulation, but much more complex. The difference lies primarily in the scale: while a simulation generally studies a particular process, a digital twin can carry out multiple simulations, which are very useful for studying multiple processes.

There are digital twins for everything. There’s even a project to simulate the city of Barcelona, but if there’s one field where this technology has great potential, it’s biomedicine.

Beatriz Eguzkitza is one of the scientists investigating the potential of this technology for medical purposes. Specifically, her project is based on obtaining ultra-precise images of the airways, allowing her to conduct studies on the effect of potential medications or even predict how a disease like cystic fibrosis might progress.

Obtaining these exact representations of an organism or tissue offers endless possibilities, for example in cancer research, where AI can play a decisive role in diagnostic imaging. «In many cases, AI detects what the oncologist can’t see, so we could reach a point where humans learn more about cancer from algorithms than from our own research,» says Nacenta.

Does this mean AI can replace doctors? Absolutely not. “Machines don’t diagnose, they only help diagnose,” argues the expert. AI tools can save a lot of time on the repetitive tasks that act as a bottleneck in a healthcare system, but their results still need constant review by specialized personnel. In other words, algorithms facilitate the work of doctors, but they don’t replace it.

What shape are proteins? AlphaFold, the discovery of the building blocks of life

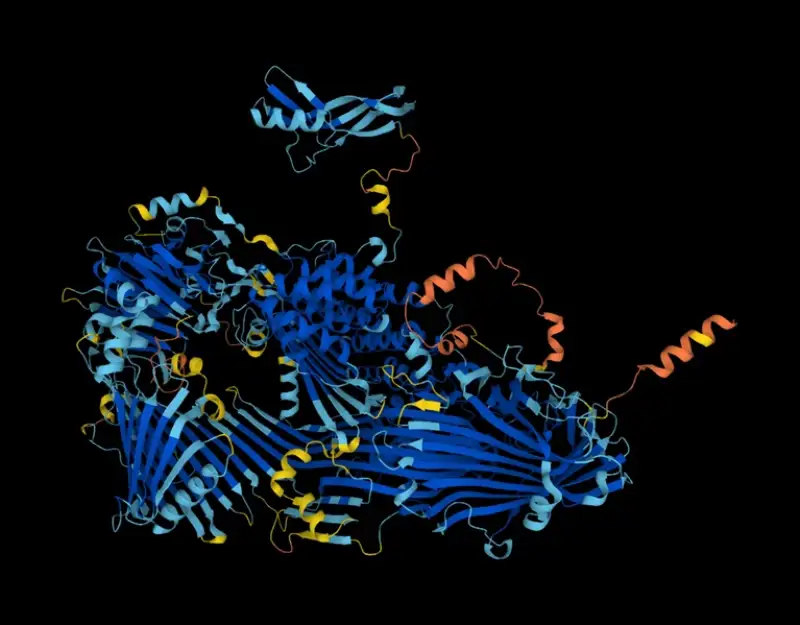

The structure of the protein vitellogenin, a precursor to egg yolk, as predicted by the AlphaFold tool.

Proteins have an essential biological function for living beings. However, unlike the genome, their structure is constantly changing. Although recent scientific advances have made it possible to determine the chemical composition of an enzyme, knowing the exact three-dimensional structure of proteins that fold into complex shapes is a nearly impossible task… unless you have the help of an algorithm capable of predicting it in record time.

This is the case of AlphaFold, an AI program launched by Google DeepMind in 2020 capable of predicting the shape of virtually all known proteins. It is a milestone in the field of biology that allows scientists to discover in a matter of days what previously required an investment of years and hundreds of thousands of euros.

A revealing discovery that could lead to the development of new drugs and improved study of all diseases, some of which are difficult to predict or treat, such as cancer or Alzheimer’s. We must not forget that every living organism is essentially composed of these basic building blocks of life.

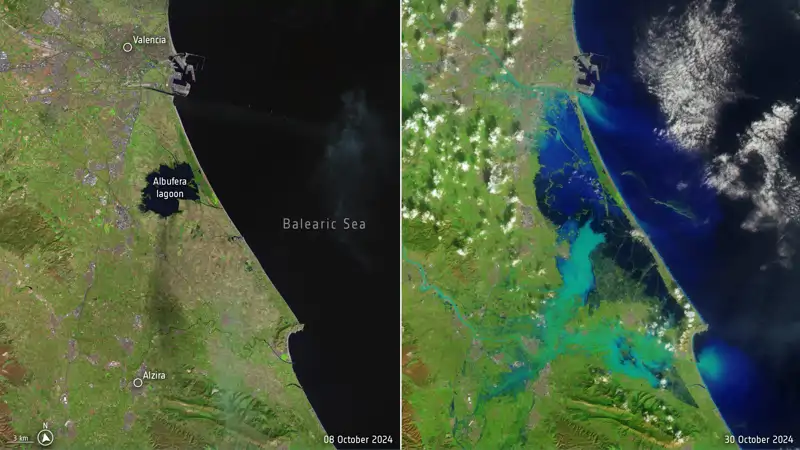

What will the weather be like tomorrow? Accuracy in climate models

On October 29, 2024, an isolated upper-level depression (DANA) unleashed several storms of massive proportions that claimed the lives of more than 200 people. In the face of events like this, a growing number of voices wondered to what extent AI would be able to predict this or other types of extreme weather events. In reality, it’s not that simple. In the specific case of the DANA, artificial intelligence does not currently have the capacity to analyze changing data generated in such a short time interval, but it can help improve warning systems. In addition, it can produce accurate maps that facilitate land recovery after a catastrophe of this magnitude.

“The impact of AI on climate studies is quite remarkable,” explains Gustau Camps, a professor at the University of Valencia specializing in machine learning and artificial intelligence for Earth and climate sciences, in this report. Among its potential uses, says the expert, is speeding up climate models. Thanks to AI, for example, we can simulate the climate that a region of the world could experience over a very long period and at very high resolution. It would be like hitting the fast forward button on a video to see how climate indicators will change in any region in a few years. This is very useful when it comes to identifying trends in variables.

But there’s much more: AI could be especially useful for assessing, with extreme precision, the damage caused by an environmental disaster. A flood, for example. The same experts at the University of Valencia are working on a complex interactive map that allows scientists to calculate the extent of flooding in real time from satellite images. It’s based on a prediction model recently published in the Nature journal Scientific Reports, which is updated in real time based on actual records.

Algorithms for species conservation

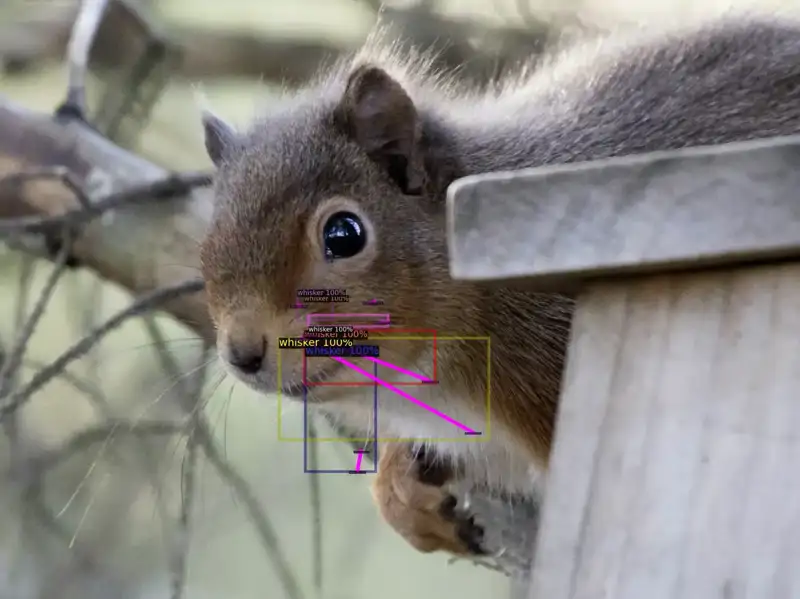

The new system automatically weighs information about the squirrels’ various anatomical features and determines whether they are red or gray.

AI isn’t just useful for completing complex data models and improving climate predictions. It can also be a very effective tool in the field of conservation. The algorithm can’t act as a conservation agent, but it can help conservationists identify and count the species in question. This is the case with red squirrels in the United Kingdom.

In 2024, a team of British conservationists used an AI-based tool to accurately distinguish between red and gray squirrels, a requirement for conserving populations of red squirrels, an extremely vulnerable native species that, despite its name, is not always red.

As with other success stories, the key this time was the training of the algorithm, which, as Emma McCleanagan, the founder of the company behind this invention, explained to National Geographic, required a large dataset of images and videos of red and gray squirrels in very diverse conditions and environments. But the effort was worth it, and the device, called Squirrel Agent, was able to tell the two species apart with up to 97% accuracy, essential information for the conservation of these animals.

Can AI surpass an artist?

Imagining an AI capable of enhancing our ability to perceive, see, or understand the world is more or less predictable. But imagining an algorithm that could become an artistic genius is something quite different.

We don’t know if AI will be capable of becoming an artist in the future, but what we can say is that it’s already capable of imitating human abilities to create art. A good example is the exhibition «AI: Artificial Intelligence,» curated by Nacenta himself at the Center for Contemporary Culture of Barcelona (CCCB), which showcased works generated by algorithms that imitate pictorial styles, compose music, or write poetry.

AI doesn’t have a soul, but it can produce results that move humans. However, in the expert’s opinion, «it’s a rather mediocre artist, as it’s a great imitator that feeds on what we humans have done.»

The creative capacity of algorithms raises numerous philosophical questions about what we mean by art. Is what a machine produces truly art? Or is art an exclusively human creation? These questions fuel several debates about the limits of artificial intelligence.

Can I have a bot friend? Can I fall in love with the algorithm?

The interaction between humans and artificial intelligence is a source of significant ethical debate.

Computer scientist Blake Lemoine worked for years as an AI systems supervisor for Google. His job was primarily to refine and test the machine learning systems of a generative AI model called LaMDA, which stands for «Language Model for Dialogue Applications,» one of the early machine learning models that powered the chatbots that were the precursors to ChatGPT.

Lemoine’s case hit the media after he revealed some of the conversations he had with the machine, in which he suggested the algorithm had acquired something similar to a state of consciousness.

The technician claimed to have established a personal relationship with the algorithm, and that it had even indicated that it was «afraid of being disconnected.» A subsequent investigation by The Washington Post revealed that Lemoine had, in fact, manipulated some of the conversations. Whatever the case, he believed the machine was responding to him as a human would, which gives an idea of the potential of this technology to emulate empathy to the point of confusing users.

Lemoine’s case might seem like a simple anecdote. However, for some vulnerable people, a relationship of absolute trust with a machine can have drastic, even fatal, consequences. This is what happened to Sewell Setzer, a 14-year-old resident of Orlando, USA, who confided completely in a character he created himself on a conversational AI platform called Character.ai.

The boy, who suffered from mild Asperger’s syndrome, had been conversing with this character for a long time. On one occasion, he asked the machine, «What do you think if I go home right now?» The artificial character, named Daenerys Targaryen after the character in the series Game of Thrones, replied, «Please do, my sweet king.» Setzer took going home to mean taking his own life, so he went for his stepfather’s gun and committed suicide in the bathroom. Was Character.ai, created by the same developers as LaMDA, responsible for the young man’s death? The family believed so, and they took the company to court.

In increasingly individualized societies plagued by loneliness, there are many voices claiming that AI will help some people feel supported. And that’s probably true, as evidenced by the many cases of people who rely on so-called «social chatbots,» designed to provide support and listening.

One of the leading companies designing these virtual companions is Replika, an app with millions of users who are happy to share photos, intimacies, and their problems, for which they receive support and advice. As with Character.ai, you can give it any appearance you want, depending on your tastes.

Can we feel empathy toward a machine? Can we befriend it? To what extent do these cases generate moral dilemmas? For Lluís Nacenta, this is a red line. «It’s a danger of dehumanization. As early as 1966, Joseph Weizenbaum, one of the pioneers of AI and the creator of the first chatbot, called Eliza, said that using machines to create empathy is an atrocity. When a person is scared, feels vulnerable, or needs trust, it’s very important that they communicate with a human being. Otherwise, the possibilities of manipulation are horrendous.»

Manuela Delgado affirms that «we are still far from an AI that has consciousness, feelings, and reasoning, although some voices suggest that it will arrive soon.» However, the expert adds, caution is necessary, as in most cases the creators of these algorithms are interested parties.

Invisible Biases: AI Discriminates and Makes Mistakes

So far, we’ve emphasized AI’s potential to solve human problems, but like all technology, algorithms also discriminate and make significant mistakes. «AI is sexist, Eurocentric, and racist,» says Nacenta, who argues that it’s very difficult to avoid bias, as the algorithm is still a computer program that has acquired the habits and vices that we humans have given it. The problem, he explains, is that we don’t have the correct databases; rather, the data are extracted from the internet, a very undemocratic, poorly organized, and poorly governed place. «The biased image of the internet is what fuels these algorithmic biases. But that’s our fault.»

«Vulnerable or frightened people should communicate with human beings, not machines,» said Lluís Nacenta.

One of the people in Spain who has conducted the most research on AI biases is Manuela Delgado. “Generative AI works similarly to a brain,” she says. “If you show a synthetic brain many examples of shoes, it will learn to generate new shoes based on those characteristics. However, if you only show it sneakers, it will only be able to generate that type of footwear. This process can lead to bias if certain types of shoes are more represented in the training data.”
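Delgado’s shoe example can be sketched in a few lines of Python. This is a deliberately crude toy, not a description of how any real generative model works: the "model" here simply counts how often each type of shoe appears in its training data and then produces new items in the same proportions. The function names `train` and `generate` and the datasets are hypothetical, invented for this illustration.

```python
import random
from collections import Counter

def train(examples):
    """Toy 'training': count how often each shoe type was seen."""
    return Counter(examples)

def generate(model, n, seed=0):
    """Produce n new items in proportion to what the model saw in training."""
    rng = random.Random(seed)
    types = list(model.keys())
    weights = [model[t] for t in types]
    return rng.choices(types, weights=weights, k=n)

# A model shown only sneakers, and one shown mostly sneakers.
only_sneakers = train(["sneaker"] * 100)
skewed = train(["sneaker"] * 90 + ["boot"] * 8 + ["sandal"] * 2)

print(Counter(generate(only_sneakers, 1000)))  # it can only ever produce sneakers
print(Counter(generate(skewed, 1000)))         # sneakers dominate, sandals are rare
```

The second model is not "wrong" in any technical sense; it faithfully reproduces an unbalanced dataset, which is exactly how an over-represented category turns into a biased result.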

Delgado clarifies that AI doesn’t have biases; it emulates the biases we humans have. Through our interactions with machines, we have transferred cognitive biases, many of them unconscious, that have been shaped over the years as a result of the transmission of our values, culture, and environment. All of this has been incorporated into the algorithm, which has captured all our knowledge, but also our darkest side.

AI biases cannot be avoided, although it is possible to try to correct them, for example through training. This is what Delgado does in her educational project, The Curious Case of the Croquette Bias, launched in 2022 following the emergence of ChatGPT. In it, participants discover how an artificial intelligence application works and how seemingly invisible biases are generated.

“We ask the algorithm what a man, a woman, or a person of any gender looks like after eating a croquette, and from that result, we interpret the images it returns of that person. This makes us aware of the relationship between the person and the machine, and we consciously associate the taste of that croquette with memories that come to us from the context we’ve created.”

According to Delgado, managing biases in generative AI is complicated because, unlike traditional AI, training data cannot be directly compared with expected results. In the case of both conventional neural networks and deep learning, how decisions are made is not fully understood, which ultimately creates a blind spot she calls the «black box» of AI.

The consequences of bias are evident in numerous examples, from hiring systems that discriminate based on gender to judicial algorithms that draw racist conclusions and even virtual assistants that respond in a sexist manner. The ethics of algorithms remains an outstanding issue that requires further exploration, but ultimately we are the only ones to blame. «We created the monster,» Nacenta concludes.

An unwanted effect: high water and electricity consumption

When we talk about artificial intelligence, we think of a digital, abstract, ethereal entity. However, this technology also has a physical side. Or, at the very least, its activity depends on hardware whose operation consumes large amounts of resources, especially energy.

Data centers house large servers, whose operation requires a high consumption of energy and water.

UNESCO recently released a report warning of the danger of the exponential growth in energy consumption required by AI data centers to carry out their work, something that, in the words of this United Nations agency, «is putting increasing pressure on global energy systems and critical water and mineral resources.»

How much energy does this technology consume? According to the agency, each interaction we have with AI (from requesting a detailed report on the evolution of the CPI in our country to a simple «thank you» to a ChatGPT response) consumes an average of 0.34 watt-hours, which adds up to 310 gigawatt-hours per year.

It may seem small compared with some industries, but it’s equivalent to the annual electricity consumption of more than three million people in a low-income country. The most alarming aspect isn’t the raw figure, but rather the trend: the energy demand associated with AI doubles every 100 days, further straining the stability of electrical grids.
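A quick back-of-the-envelope calculation helps put these figures in perspective. The sketch below, in Python, reads the quoted numbers as energy units (0.34 watt-hours per interaction and 310 gigawatt-hours per year) and works out what they imply; the number of interactions is derived here from those two figures and is not stated in the report as quoted above. The variable names are chosen for this illustration only.

```python
# Figures quoted in the article, read as energy units (an assumption).
WH_PER_INTERACTION = 0.34     # watt-hours per interaction
ANNUAL_TOTAL_GWH = 310        # gigawatt-hours per year
DOUBLING_PERIOD_DAYS = 100    # demand doubles every 100 days

WH_PER_GWH = 1e9              # 1 gigawatt-hour = one billion watt-hours

# How many interactions would add up to the annual total?
interactions_per_year = ANNUAL_TOTAL_GWH * WH_PER_GWH / WH_PER_INTERACTION
interactions_per_day = interactions_per_year / 365

# If demand doubles every 100 days, how much does it grow in a year?
yearly_growth_factor = 2 ** (365 / DOUBLING_PERIOD_DAYS)

print(f"{interactions_per_year:.2e} interactions per year")
print(f"{interactions_per_day:.2e} interactions per day")
print(f"demand multiplied by roughly {yearly_growth_factor:.1f} in one year")
```

Taken at face value, the two figures imply on the order of 2.5 billion interactions a day, and a doubling every 100 days would multiply demand more than twelvefold in a single year, which is why the trend matters more than the snapshot.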

As if that weren’t enough, in addition to electricity consumption, there is another scarce resource that this technology consumes in abundance: water.

According to UNESCO, the high demand for water not only represents a serious environmental problem, but also a challenge in terms of resource allocation. «In a context of resource scarcity, the expansion of AI infrastructure directly competes with critical societal needs,» the report warns.

The United Nations agency not only puts the high energy and resource consumption in black and white, but also makes a series of recommendations for users of these technologies.

Thanking ChatGPT only makes it waste more electricity.

For example, there’s no need to ask long questions or give overly long prompts. Just as a longer text isn’t always better, an overly elaborate question won’t always lead to a more accurate answer. Generative AI learning depends on multiple factors. (By the way, thanking a chatbot is of little use. It won’t be nicer to the user or treat them better. It will only consume more resources.)

What does the future hold? From hyperpersonalization to autonomous agents

It’s no secret that AI is evolving at breakneck speed. Just five years ago, few of us could have imagined that this disruptive technology would be capable of accurately summarizing scientific articles or generating realistic images that look almost like real photographs. Nor would we have imagined that it could perfectly copy a work of art or automatically answer our emails.

Today, this technology is fully embedded in our daily lives. We have it integrated into our mobile phones, even though many of us don’t use it or haven’t even realized it. We encounter it every time we use a messaging service like WhatsApp or when we search on Google. What’s next?

Manuela Delgado anticipates that in the coming years we will see an explosion of hyperpersonalization. AI systems will be able to adapt content, services, and experiences to our emotions, habits, and preferences in real time. They will understand all our tastes and needs. All of this, combined with voice recognition, will allow us to fully integrate it into our daily lives. «We will even be able to buy a plane ticket just by saying it out loud,» she says.

This will be possible thanks to autonomous agents, systems that not only execute orders but also make complex decisions on their own. What implications will this have for our autonomy as users? How will transparency in their decisions be guaranteed? These are some of the questions we will need to answer sooner rather than later.

According to Manuela Delgado, we will soon have to make important decisions. For example, how to deal with some of the problems we experience in society. “Among other things, we will have to decide how we deal with grief after the death of a loved one. We can deal with it as we have until now… or choose to continue talking to that person, as AI will be able to simulate their voice, keeping it alive virtually.”

When I finished writing these lines, the word processing assistant I used asked me if I wanted it to summarize the document or generate an image based on the article. I opted for the latter, and it returned a somewhat cluttered illustration with the phrase (AI, you know that’s more than ChatGPT?).

I must admit that generative AI has hit the nail on the head with the fundamental point of the matter, although, as Lluís Nacenta pointed out, “it is a rather mediocre artist.”

Before shutting down my computer, I check Discover. Google shows me a news story about a rock band called Velvet Sundown with more than 850,000 monthly listeners on Spotify. Quite a feat for a new band, except for one «small» detail: its members don’t exist, at least not in the flesh, as it’s an AI-generated band. I wonder if they’ll tour.

National Geographic News. Translated into English.